Resilience and Data Protection: Hardening Cloud Pipelines

Reliability and Security are two fundamental pillars of the AWS Well-Architected Framework. In this fifth installment, I break down the technical safeguards implemented to transform a standard data pipeline into a resilient, production-grade infrastructure.

Building in the cloud is not just about functionality; it’s about ensuring that the system can withstand errors and protect its most valuable asset: the data.

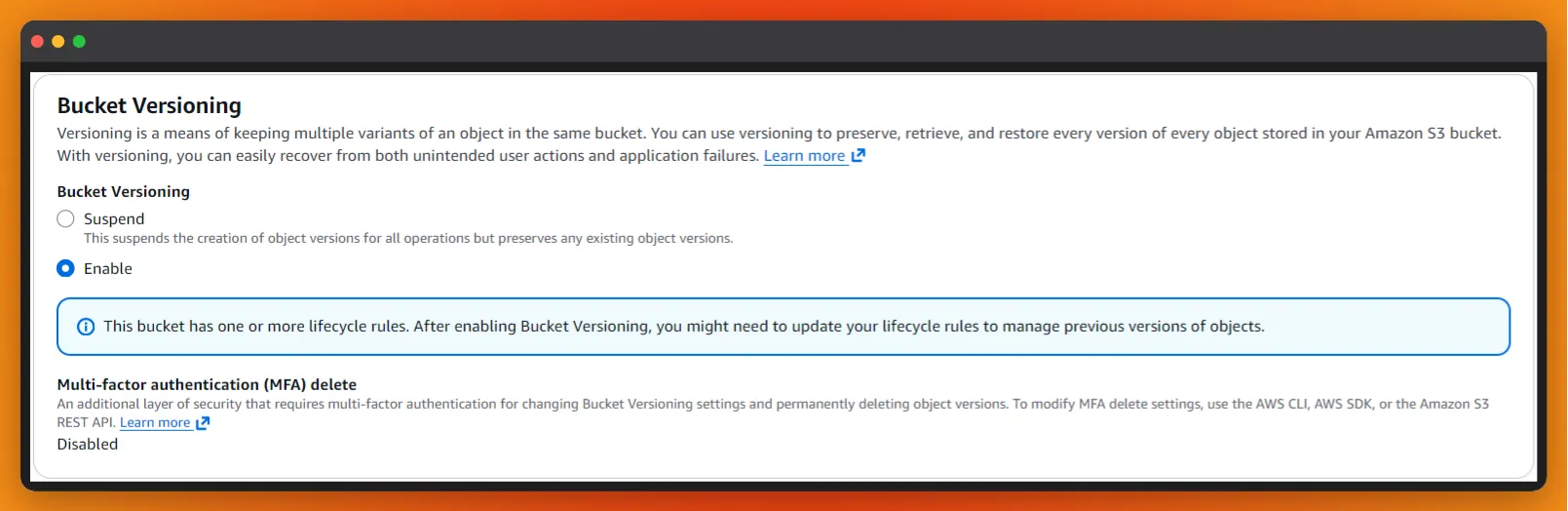

1. Implementing Data Immutability with S3 Versioning

Data loss often comes from within: accidental deletions or logic errors during updates can be more catastrophic than external threats. To mitigate this, I’ve established a strategy of Data Immutability, in the sense that no stored version of an object is ever silently overwritten.

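The Solution: I enabled Bucket Versioning, which preserves every version of an object, including versions that are later overwritten or deleted. An overwrite creates a new version instead of replacing the old one, and a delete only adds a delete marker on top of the existing versions. This gets the bucket close to a “Zero-Loss” state: any file can be rolled back to an earlier version without restoring from a separate backup. This provides a critical layer of protection for high-value drone inspection data, so that a human or programmatic error in an ordinary write or delete is recoverable.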
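As a rough illustration of the versioning workflow above, here is a minimal Python sketch. It assumes a boto3-style S3 client is passed in (for example `boto3.client("s3")`), and it relies on the `put_bucket_versioning`, `list_object_versions`, and `copy_object` client calls. The function names and the bucket and key names are hypothetical, not taken from the actual pipeline, and pagination of long version histories is ignored.

```python
def enable_versioning(s3_client, bucket):
    """Turn on versioning for the given bucket."""
    s3_client.put_bucket_versioning(
        Bucket=bucket,
        VersioningConfiguration={"Status": "Enabled"},
    )


def previous_version_id(s3_client, bucket, key):
    """Return the newest stored version that is not the current one, or None.

    If the current "version" is a delete marker, every stored version is
    non-current, so this returns the last real copy of the object.
    Only the first page of results is inspected (an assumption of this sketch).
    """
    response = s3_client.list_object_versions(Bucket=bucket, Prefix=key)
    candidates = [
        v for v in response.get("Versions", [])
        if v["Key"] == key and not v["IsLatest"]
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda v: v["LastModified"])["VersionId"]


def restore_previous_version(s3_client, bucket, key):
    """Copy the previous version on top of the object; return its VersionId."""
    version_id = previous_version_id(s3_client, bucket, key)
    if version_id is None:
        return None
    # The copy becomes a new current version; older versions are left intact.
    s3_client.copy_object(
        Bucket=bucket,
        Key=key,
        CopySource={"Bucket": bucket, "Key": key, "VersionId": version_id},
    )
    return version_id


# Hypothetical usage (requires boto3 and AWS credentials):
#   import boto3
#   s3 = boto3.client("s3", region_name="eu-central-1")
#   enable_versioning(s3, "drone-inspection-raw")
#   restore_previous_version(s3, "drone-inspection-raw", "flights/scan-001.tif")
```

Restoring by copy, rather than by deleting the newer version, keeps the full history available for auditing.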

Figure 1: Protecting data integrity through Versioning, allowing for instant recovery and historical auditing.

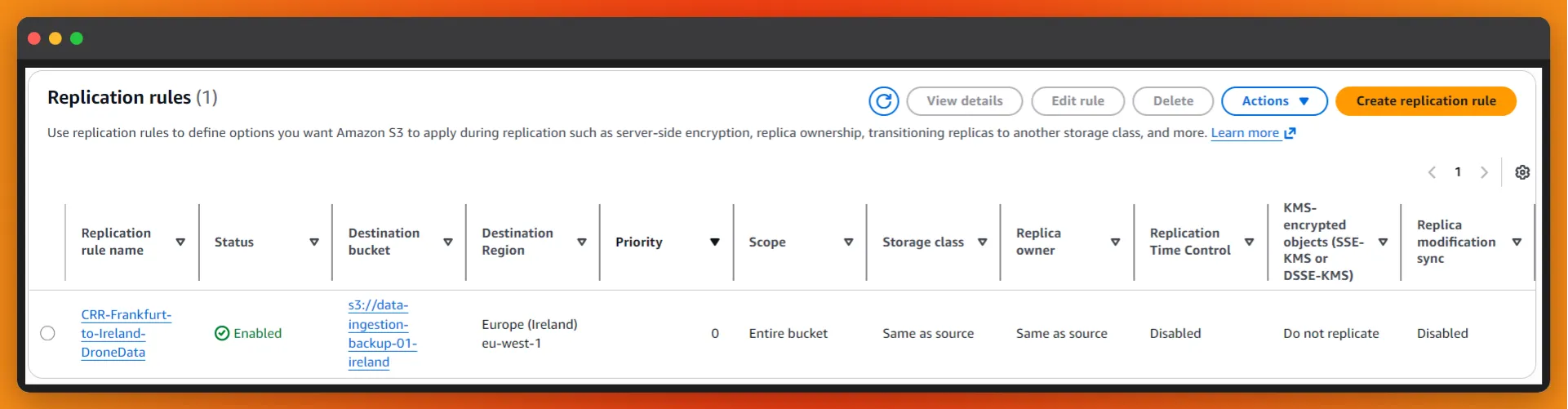

2. Geographic Redundancy (Cross-Region Replication)

To address Business Continuity and prepare for rare but impactful regional outages, I implemented Cross-Region Replication (CRR).

The Strategy: Data ingested in Frankfurt (eu-central-1) is asynchronously replicated to Ireland (eu-west-1).

I specifically chose these regions to satisfy two requirements:

- Compliance: Ensuring data remains within the EU to maintain GDPR compliance.

- Disaster Recovery (DR): Maintaining enough geographic distance for the second region to serve as a robust backup site if the primary region faces a service disruption.
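The replication setup described above could be expressed roughly as follows. This is a sketch under stated assumptions: the helper names, the bucket names, and the role ARN are hypothetical, and the region allowlist simply encodes the two regions named in this article. The dictionary follows the shape boto3 expects for the `ReplicationConfiguration` argument of `put_bucket_replication`. Note that S3 replication requires versioning to be enabled on both the source and the destination bucket.

```python
# Regions this project is allowed to store data in (taken from the article).
ALLOWED_REGIONS = {"eu-central-1", "eu-west-1"}


def check_regions(source_region, destination_region):
    """Raise ValueError unless both regions are allowed and distinct."""
    for region in (source_region, destination_region):
        if region not in ALLOWED_REGIONS:
            raise ValueError(f"region not allowed for this project: {region}")
    if source_region == destination_region:
        raise ValueError("cross-region replication needs two different regions")


def build_replication_config(role_arn, destination_bucket, rule_id="replicate-all"):
    """Build a replication configuration that copies every new object."""
    return {
        "Role": role_arn,  # IAM role that S3 assumes to replicate objects
        "Rules": [
            {
                "ID": rule_id,
                "Priority": 1,
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # empty prefix matches every key
                "DeleteMarkerReplication": {"Status": "Disabled"},
                "Destination": {"Bucket": f"arn:aws:s3:::{destination_bucket}"},
            }
        ],
    }


# Hypothetical usage (requires boto3 and AWS credentials):
#   import boto3
#   check_regions("eu-central-1", "eu-west-1")
#   config = build_replication_config(
#       "arn:aws:iam::123456789012:role/pipeline-replication",  # placeholder ARN
#       "drone-inspection-replica",
#   )
#   boto3.client("s3", region_name="eu-central-1").put_bucket_replication(
#       Bucket="drone-inspection-raw", ReplicationConfiguration=config
#   )
```

Leaving delete-marker replication disabled in this sketch means a deletion in Frankfurt does not hide the object in Ireland, which suits a backup copy; whether that matches the real pipeline is an assumption.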

Figure 2: Architecture for Disaster Recovery: Synchronizing data across European regions for high availability.

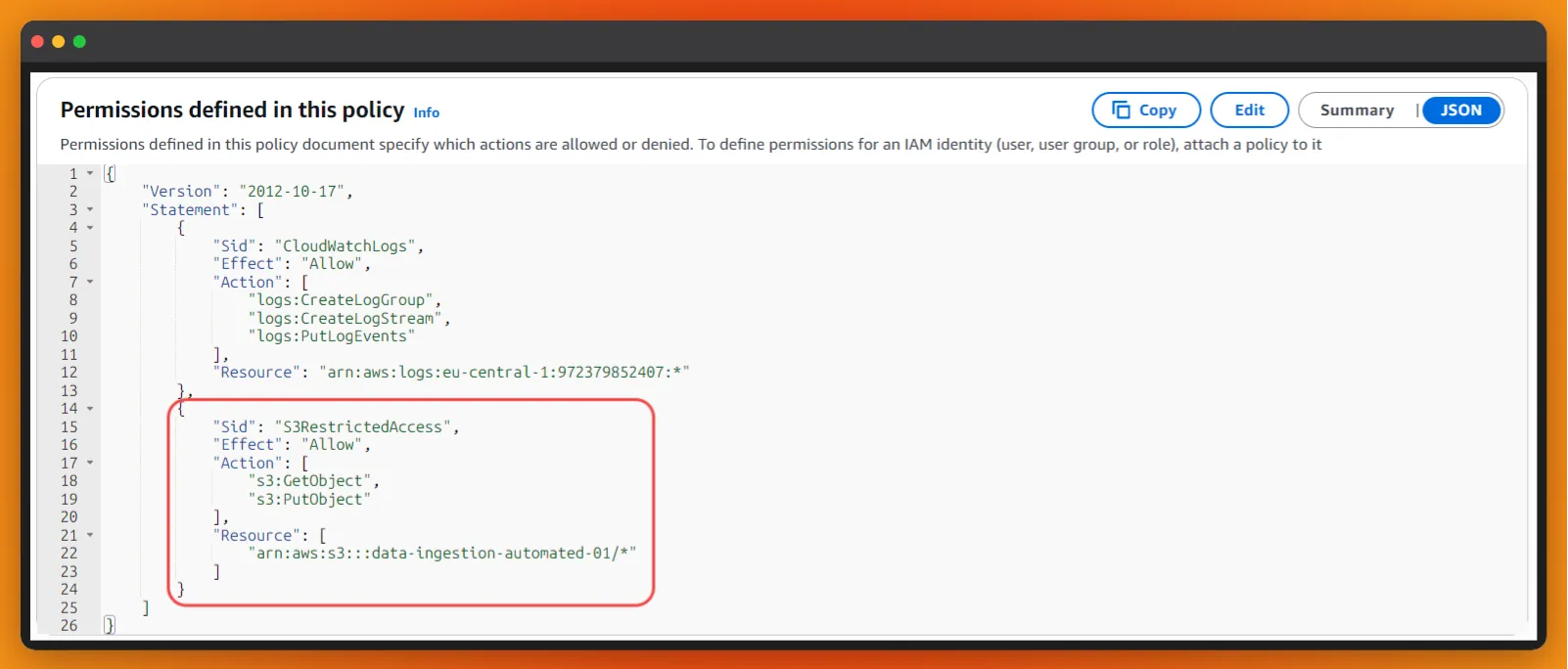

3. Hardening Infrastructure with Least Privilege IAM

In a professional environment, using wildcards (*) in IAM policies is a major security risk. To harden the pipeline, I’ve refactored the execution roles to follow the Principle of Least Privilege:

- Granular Actions: Permissions are strictly restricted to necessary operations like `s3:GetObject` and `s3:PutObject`.

- Resource Scoping: Instead of granting access to the entire account, permissions are mapped specifically to the project’s unique ARNs.

This minimizes the “blast radius.” If one component of the pipeline were ever compromised, the attacker could reach only the resources that component’s role is scoped to, and the rest of the infrastructure and data would remain isolated.
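To make the least-privilege idea concrete, the sketch below builds an IAM policy document limited to the two actions mentioned above and to a single bucket prefix, then scans a policy for bare wildcards. The bucket name, prefix, `Sid`, and function names are hypothetical; the actual roles and ARNs in the pipeline are not shown in this article.

```python
import json


def build_pipeline_policy(bucket, prefix):
    """Allow only object reads and writes under one prefix of one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadWritePipelineObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            }
        ],
    }


def find_wildcards(policy):
    """Return (sid, field, value) for every overly broad action or resource.

    Flags a bare "*" and values ending in ":*" (such as "s3:*" or
    "arn:aws:s3:::*"). A trailing "/*" on a scoped object path is allowed.
    """
    findings = []
    for statement in policy["Statement"]:
        for field in ("Action", "Resource"):
            values = statement.get(field, [])
            if isinstance(values, str):
                values = [values]
            for value in values:
                if value == "*" or value.endswith(":*"):
                    findings.append((statement.get("Sid", ""), field, value))
    return findings


def render_policy(policy):
    """Serialize the policy to the JSON text that IAM accepts."""
    return json.dumps(policy, indent=2)


# Hypothetical usage:
#   policy = build_pipeline_policy("drone-inspection-raw", "incoming")
#   assert find_wildcards(policy) == []
#   print(render_policy(policy))
```

A check like `find_wildcards` is only a lint-style guard; it does not replace reviewing what each role actually needs.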

Figure 3: Security Best Practices: Defining granular IAM policies to restrict access and minimize potential security risks.

Conclusion

Resilience is a choice made during the design phase, not an afterthought. By combining Versioning, Replication, and strict Identity Management, we build systems that don’t just process data, but protect it against accidental deletion, regional outages, and over-privileged access.

A truly professional architecture is one that is invisible when things go right and indispensable when things go wrong.

Is your data pipeline resilient enough for production? Let’s connect to audit your AWS architecture for security and high availability.